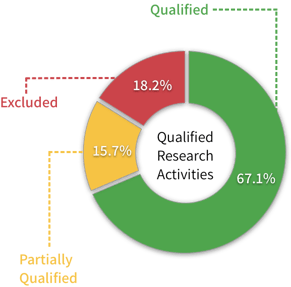

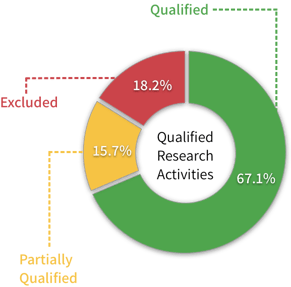

Then RetroacDev totals up the time each employee spent on activities of each type to calculate the percentage of their time spent on activities that qualify as research under the IRS rules (example shown below).

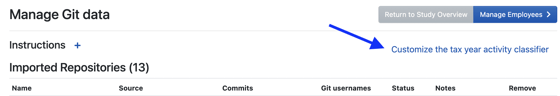
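To make the arithmetic concrete, here is a minimal sketch of that roll-up in Python. It is an illustration only, not RetroacDev's actual code: the activity type names, the `QUALIFIED_TYPES` set, and the `qualified_percent` function are all hypothetical, and which activity types really qualify depends on the IRS rules and on how your study is set up.

```python
from collections import defaultdict

# Hypothetical activity types. Which ones count as qualified research
# is an assumption made for this example only.
QUALIFIED_TYPES = {"new feature", "experimentation"}


def qualified_percent(tasks):
    """Sum hours per activity type and return (hours_by_type, percent_qualified).

    `tasks` is an iterable of (activity_type, hours) pairs for one employee.
    """
    hours_by_type = defaultdict(float)
    for activity_type, hours in tasks:
        hours_by_type[activity_type] += hours

    total = sum(hours_by_type.values())
    if total == 0:
        # No recorded time, so nothing can qualify.
        return dict(hours_by_type), 0.0

    qualified = sum(h for t, h in hours_by_type.items() if t in QUALIFIED_TYPES)
    return dict(hours_by_type), 100.0 * qualified / total


# Made-up sample data for one employee.
tasks = [
    ("new feature", 20.0),
    ("bug fix", 10.0),
    ("experimentation", 10.0),
    ("maintenance", 10.0),
]
hours, pct = qualified_percent(tasks)
print(hours)
print(f"{pct:.1f}% of time on qualified activities")
```

In this made-up example, 30 of the 50 recorded hours fall into qualifying types, so the employee's qualified percentage is 60%.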

Historically, we've used a set of rules to categorize each task. A few months ago, we started letting you edit those rules for each R&D study on the manage Git data page (screenshot below). Now, the new AI classifier is the default.

How does the AI classifier work?

The RetroacDev engineering team created a set of "training data" by having our software engineers go through hundreds of real Git commits and manually classify each one. When you run the AI classifier, it uses a machine learning algorithm to compare every new commit to the ones in the training dataset, decides which of them it's most similar to, and assigns the new commit the matching category. We expect the classifier to become more accurate over time as it acquires more data to learn from.
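If it helps to picture "compare to the training data and pick the most similar one," here is a toy sketch in Python. To be clear, this is not the model RetroacDev uses (this post doesn't go into which algorithm that is). It's a simple nearest-neighbor lookup over commit messages using word-count cosine similarity, and the `TRAINING` examples, labels, and function names are all made up for illustration.

```python
import math
import re
from collections import Counter

# Hypothetical hand-labeled commits standing in for the real training data.
TRAINING = [
    ("fix null pointer crash in login handler", "bug fix"),
    ("prototype new caching algorithm for search", "experimentation"),
    ("update README and bump dependency versions", "maintenance"),
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def classify(message, training=TRAINING):
    """Return the label of the training commit most similar to `message`."""
    vec = Counter(tokenize(message))
    best_label, best_score = None, -1.0
    for text, label in training:
        score = cosine(vec, Counter(tokenize(text)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


label = classify("fix crash in payment handler")
print(label)
```

The new commit shares the words "fix", "crash", "in", and "handler" with the first training example and nothing with the other two, so it gets labeled "bug fix". A real classifier would draw on far more examples and richer features than word counts, but the idea is the same: more labeled commits give it more to compare against.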


Evaluating AI models... using a process of experimentation :)

We tested several machine learning models and compared their results before landing on the one we felt best fit the use case. Building machine learning into our Qualified R&D Activities classifier began as a project we felt might make our data-driven R&D Study automation platform more marketable. Once we looked at the results, though, we quickly saw that the AI classifier is also more accurate than the older rule-based classifier, which is why we're making it the default classifier for all new studies.

For now, the AI classifier only works on Git data. If you're doing an R&D study based on project management data (from Jira, Trello, Pivotal Tracker, etc.), the rule-based classifier is still the only option. And don't forget, the rule-based classifier can now be customized to work the way you want it to. We hope to roll out an AI classifier for project management data soon.

If you want to see a demo, or have questions about how the AI classifier works, or how to present it to clients, please get in touch - we're more than happy to help!